AI Filmmaking Explained: How Runway, Midjourney and ElevenLabs Are Changing Movies

Three AI tools that changed what filmmaking means in 2025

A few years ago, making a film meant a crew, a budget, and months of work. In 2025, one person with a laptop made a full short film using only AI tools. It was called The New Machine Cinema. People in the film industry watched it and didn’t quite know what to say.

Not because it was perfect. Because it proved something was now possible.

The tools that made it happen are available to anyone. You don’t need a film school background or a production company to use them. You need to understand what each one does and why it matters.

This is that explanation.

Tool 01: Runway

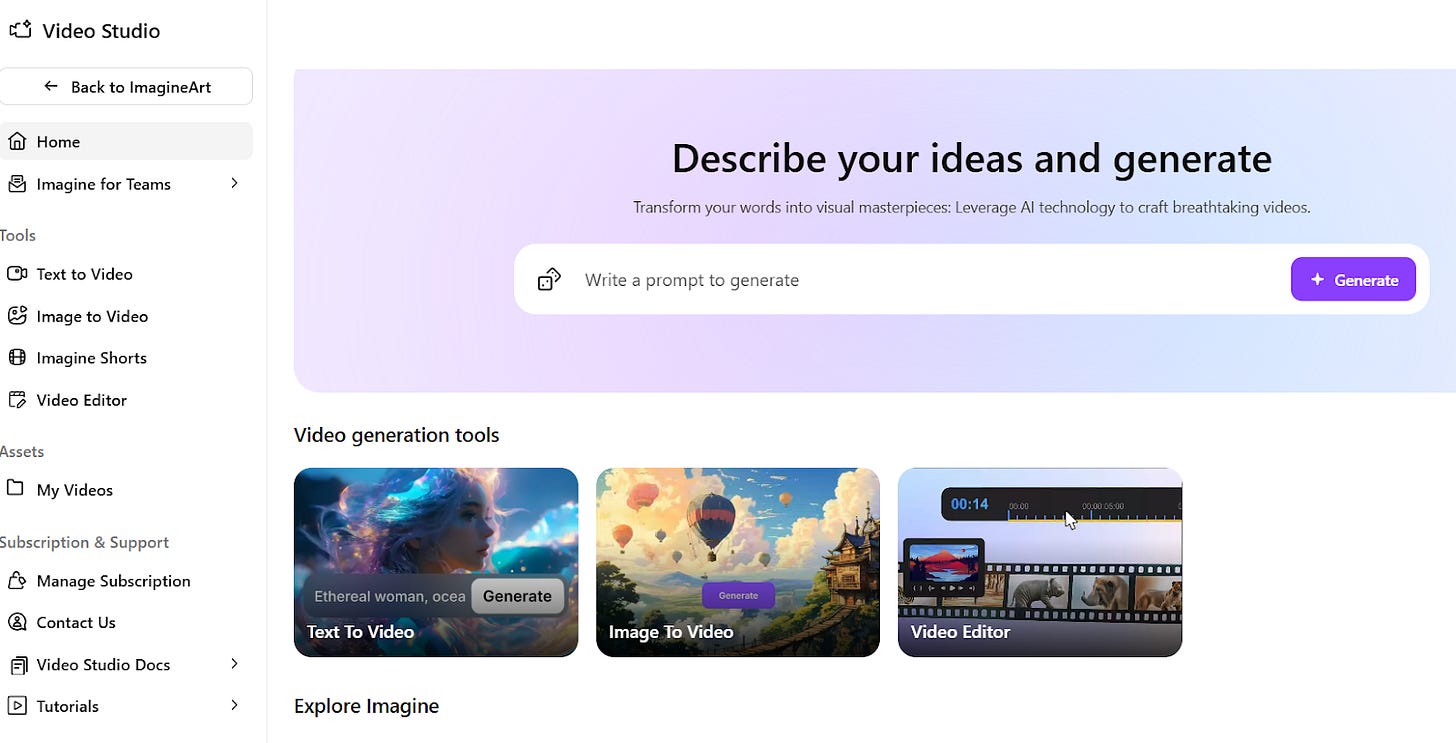

Generative video and AI editing

Runway is where video gets made or transformed. You give it a text description or an image, and it generates footage. Cinematic tracking shots. Slow motion. Aerial views. Visual sequences that would normally require a camera crew and location.

It also works on footage you already have. It can analyze your clips, suggest edits, and automate color grading. Think of it as a post-production team that works instantly and costs a fraction of the traditional price.

For independent filmmakers, this is the biggest shift. You no longer need to hire for every step of the process. Runway handles what used to take a department.

Type what you want to see and watch it appear. That’s Runway AI filmmaking in one sentence.

Tool 02: Midjourney

Visual ideation and storyboarding

Before a single second of video exists, you need to know what your film looks like. That used to mean hiring a storyboard artist and waiting days for sketches. Midjourney collapsed that timeline entirely.

You describe a scene and Midjourney generates it as a detailed image. You can explore dozens of visual directions in an afternoon. Lighting. Character design. Color palette. Mood. You arrive at production with a complete visual document already built.

The practical result is that Midjourney storyboarding gives any filmmaker a visual language they can work from, share with collaborators, or use to pitch an idea. It turns abstract creative thinking into something you can actually see.

This is the tool that changed pre-production. The ideas come faster. The decisions happen earlier. Less guessing, less waste.

Tool 03: ElevenLabs

Voice synthesis and multilingual dubbing

Voice is one of the hardest parts of low-budget filmmaking. Recording actors, booking studios, managing reshoots when dialogue changes. ElevenLabs removes most of that friction.

ElevenLabs voice AI generates human-sounding speech from text. You can create a character voice, adjust its tone and emotion, and produce dialogue without a recording session. For multilingual releases, it can take an existing voice and redub it across dozens of languages while keeping the original emotional quality intact.

The practical applications are wide. Prototype your film’s dialogue before casting. Finish a scene when an actor isn’t available. Release your film in other languages without rebuilding the audio from scratch.

This is not a voice effect. The quality has reached the point where, in a finished mix, many listeners cannot tell synthetic speech from a recorded performance.

What This Means

These three tools work as a pipeline. Midjourney shapes your vision before production. Runway builds and edits the visual content. ElevenLabs handles the voice layer. Together they cover pre-production, production, and post-production.

A filmmaker working with all three can do in days what once took months. That is the real change. Not that AI makes films, but that AI gives one person the reach of an entire team.

Films like Window Seat and DreadClub: Vampire’s Verdict showed this in practice. Both were made with minimal crews and significant AI assistance. Both demonstrated that the tools can support genuine storytelling, not just technical demos.

The Real Questions

Knowing the tools means knowing their complications too.

Ownership is unsettled. When an AI generates a visual or a voice, the question of who holds the rights to that output is still being worked out in courts and contracts. If you are building something commercial, you need to stay current on platform terms.

Displacement is real. The people whose skills overlap most with what these tools can do (concept artists, storyboard illustrators, ADR technicians) are facing genuine disruption. Using the tools well means being honest about that.

And deepfakes are the shadow side of voice and image synthesis. The same capability that lets you prototype dialogue can be used to put words in someone’s mouth. The technology is neutral. How it gets used is not.

The filmmakers who will define the next decade are the ones learning these tools now. Not to replace craft, but to extend it. The camera changed what stories could look like. Editing changed how they could move. AI is changing who gets to make them.

You do not need permission anymore. You need to understand the tools. That is what The New Machine Cinema era actually means. The barrier was never talent. It was access. These tools are changing what access looks like.

Cinema has always been shaped by its tools. AI is the next evolution of that language.
