Claude Code: A Practical Guide to Agentic "Vibe Coding" from the Terminal

What happens when you stop writing code and start directing it.

There is a quiet but meaningful shift happening in how software gets built.

For most of programming’s history, writing code meant a particular kind of solitary focus. A developer, alone with a problem, translating intent into syntax one line at a time.

That model is changing.

Today, a growing number of engineers and product professionals describe their workflow differently. They are not writing code so much as directing it. They articulate intent in plain language, review what gets produced, steer and correct, and move forward.

The cognitive energy that once went into syntax and boilerplate now goes toward architecture, judgment, and product thinking.

This is what people are calling agentic vibe coding. And Claude Code, Anthropic’s terminal-based agentic tool, is one of the most capable instruments for doing it.

This article is a practical guide to what it is, how it works, and why it matters for senior product and AI professionals.

What Vibe Coding Actually Means

Simply put: you describe what you need, and an AI system handles the translation into working code.

Your role shifts from line-by-line construction to direction, review, and judgment.

In a conventional workflow, a large portion of a developer’s time goes toward work that is necessary but not intellectually distinctive: boilerplate, syntax, and documentation lookups. Vibe coding collapses that overhead, freeing up mental space for the decisions that actually require human expertise.

Meet Claude Code

Claude Code is not an autocomplete engine with a better vocabulary.

It reads files across your entire project, writes and modifies code, executes commands, manages git workflows, runs tests, and interacts with external tools. It understands context across multiple directories and navigates a codebase the way an experienced engineer would.

Here is what that looks like in practice.

You need to add authentication to an existing API. You open Claude Code, describe what you need, including the mechanism, the session handling, and the test coverage you want. Claude reads your codebase, understands the existing structure, makes changes across the relevant files, and surfaces only the decisions that genuinely require your input.

It is not waiting to be micromanaged. It is working, and checking in where judgment is actually required.

For senior professionals who think about leverage and compounding productivity, that distinction matters.

Why the Terminal Is the Right Home for This

The terminal is fast, direct, and composable. There is no interface loading between you and the work. Commands execute immediately. The environment is scriptable, which means Claude Code can sit inside larger automated workflows rather than existing as an isolated tool.
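To make "scriptable" concrete, here is a minimal sketch of calling Claude Code from a Python script as one step in a larger workflow. It assumes the `claude` CLI is on your PATH and that its `-p` (print) flag runs a single prompt non-interactively and exits. The function names and the timeout value are hypothetical choices for illustration.

```python
import shutil
import subprocess
from typing import List, Optional


def build_command(prompt: str) -> List[str]:
    """Build the argument list for a one-shot, non-interactive call."""
    # Assumption: `-p` prints the response and exits instead of opening
    # the interactive session.
    return ["claude", "-p", prompt]


def run_claude(prompt: str, timeout_s: int = 300) -> Optional[str]:
    """Run one prompt and return stdout, or None if the CLI is missing."""
    if shutil.which("claude") is None:
        return None  # CLI not installed; let the caller decide what to do
    result = subprocess.run(
        build_command(prompt),
        capture_output=True,  # collect stdout/stderr instead of printing
        text=True,            # decode bytes to str
        timeout=timeout_s,    # do not let an automated step hang forever
        check=True,           # raise CalledProcessError on a non-zero exit
    )
    return result.stdout


# Example use inside a larger script (not executed here):
#   summary = run_claude("Summarise the changes in the last commit.")
```

Because the call is an ordinary subprocess, the same pattern could sit inside a CI job or a pre-release script, with a human still reviewing whatever comes back.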

More importantly, proximity to the actual environment where code runs matters.

A terminal-based agent has access to the file system, the development environment, and the execution context simultaneously. It is operating in the same space a developer operates in, not simulating it from the outside.

Claude Code runs entirely in text. You type instructions, press Enter, and use /help to see available commands. For experienced professionals, the interface feels minimal in a deliberate way.

What an Agentic Session Looks Like

Here is a concrete example worth walking through.

You are working on a Python API returning 500 errors on a specific endpoint. You open Claude Code and describe the problem: the endpoint, the error, and any relevant context.

Claude does not look only at the file you mentioned. It reads your route definitions, traces through the middleware layer, checks the database connection logic, and follows the error to its source. In this case, the source is a missing null check three files deep, in a model definition that nobody updated when the schema changed.

> The /users endpoint is returning 500 errors intermittently. Can you diagnose and fix the issue?

Claude: I've traced the issue to db/models.py. The get_user() function doesn't handle cases where a user ID doesn't exist. It returns None, which causes the serialiser to fail downstream.

I'll add a proper 404 exception and update the error handling in the route.

Would you also like a regression test for this case, and updated API documentation to reflect the new error response format?

That last question is the agentic part. Claude is not waiting to be told each next step. It is moving through the problem and surfacing what comes next.
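The kind of fix described in that exchange might look roughly like the sketch below. It is framework-free so it runs on its own. Only `get_user()` and the 404 behaviour come from the example; the in-memory `USERS` table, the `UserNotFound` exception, `find_user_row()`, `serialise()`, and `users_route()` are hypothetical stand-ins for the real project's code.

```python
from typing import Optional, Tuple

# Hypothetical stand-in for the database; a real project would query one.
USERS = {1: {"id": 1, "name": "Ada"}}


class UserNotFound(Exception):
    """Raised when no user exists for the requested ID."""


def find_user_row(user_id: int) -> Optional[dict]:
    """Raw lookup that returns None for an unknown ID (the original bug)."""
    return USERS.get(user_id)


def get_user(user_id: int) -> dict:
    """Fixed lookup: fail explicitly instead of passing None downstream."""
    row = find_user_row(user_id)
    if row is None:
        raise UserNotFound(f"user {user_id} does not exist")
    return row


def serialise(user: dict) -> dict:
    """Would raise TypeError if handed None, which surfaced as a 500."""
    return {"id": user["id"], "name": user["name"]}


def users_route(user_id: int) -> Tuple[int, dict]:
    """Route handler returning (status_code, body)."""
    try:
        return 200, serialise(get_user(user_id))
    except UserNotFound as exc:
        # Translate the domain error into a 404 rather than a crash.
        return 404, {"error": str(exc)}


def test_missing_user_returns_404() -> None:
    """Regression test for the case that used to produce a 500."""
    status, body = users_route(999)
    assert status == 404
    assert "999" in body["error"]
```

The regression test is the piece worth keeping even if the rest changes: it pins down the behaviour that broke, so the next schema change cannot silently reintroduce it.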

Current capabilities include multi-file editing, contextual git commit messages, pull request management, and experimental multi-agent collaboration where one coordinating agent delegates tasks to specialised subagents running in parallel.

The Shift in How Work Feels

This part tends to be underemphasised in technical writeups, but it is worth addressing directly.

When developers work alone on complex problems, cognitive friction accumulates. Every context switch, every debugging loop, every documentation lookup is a small tax on attention. The fatigue at the end of a hard session is less about the thinking and more about the overhead surrounding it.

Working with an agentic collaborator changes that dynamic.

The implementation friction decreases, which means mental bandwidth stays on the problems that genuinely require it: architectural decisions, product trade-offs, edge cases, the judgment calls that experienced professionals are well-positioned to make.

This matters especially for senior PMs who increasingly need to engage with technical implementation, not to write code themselves, but to evaluate feasibility in real time and build shared understanding with engineering teams. Claude Code offers a practical way to explore technical spaces without requiring deep implementation expertise at the syntax level.

Getting Started: What You Actually Need to Know

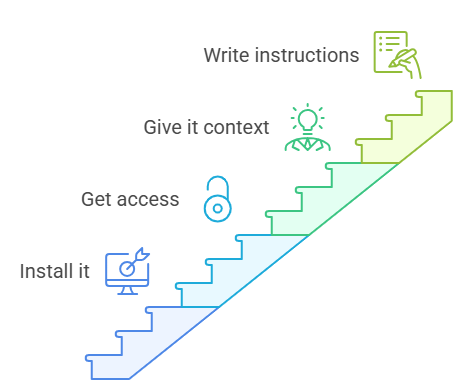

Step 1: Install it.

curl -fsSL https://claude.ai/install.sh | bash

Windows users can run the equivalent through PowerShell. Navigate to your project directory, run claude, and the first launch will prompt you to authenticate through a browser window.

Step 2: Get access.

Claude Code requires a paid Anthropic subscription, either Claude Pro or Max, or an Anthropic Console account with billing enabled. For regular use, the Max subscription is generally more economical than pay-per-token API access.

Step 3: Give it context.

Create a CLAUDE.md file at the root of your project. Claude Code reads this automatically at the start of every session, giving it persistent context about your codebase conventions, architecture decisions, and preferences. This single step eliminates the need to re-establish context every time you open a session.
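If you are unsure what to put in that file, the sketch below generates a starter version. The section headings and bullet text are assumptions for illustration, not a schema Claude Code requires, and the helper names are hypothetical. Replace the contents with your own conventions.

```python
from pathlib import Path
from typing import Dict, List

# Hypothetical starter content; edit freely to match your project.
STARTER_SECTIONS: Dict[str, List[str]] = {
    "Project overview": [
        "Python API service; routes, middleware, and models are separate packages.",
    ],
    "Conventions": [
        "Raise explicit exceptions instead of returning None from lookups.",
        "Every bug fix ships with a regression test.",
    ],
    "Commands": [
        "Run tests: pytest",
    ],
}


def render_claude_md(sections: Dict[str, List[str]]) -> str:
    """Render the sections as a small Markdown document."""
    lines = ["# CLAUDE.md", ""]
    for heading, bullets in sections.items():
        lines.append(f"## {heading}")
        lines.extend(f"- {bullet}" for bullet in bullets)
        lines.append("")  # blank line between sections
    return "\n".join(lines)


def write_claude_md(project_root: Path, sections: Dict[str, List[str]],
                    overwrite: bool = False) -> Path:
    """Write CLAUDE.md at the project root, refusing to clobber by default."""
    target = Path(project_root) / "CLAUDE.md"
    if target.exists() and not overwrite:
        raise FileExistsError(f"{target} already exists")
    target.write_text(render_claude_md(sections), encoding="utf-8")
    return target
```

Calling `write_claude_md(Path("."), STARTER_SECTIONS)` from the project root would create the file once; after that, it is better maintained by hand as conventions evolve.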

Step 4: Write instructions that focus on outcomes.

You do not need technical precision. Describe what you want to achieve and what constraints matter.

“Refactor this service to be more testable” works. “Add error handling that will be easy to extend later” works.

Claude will ask clarifying questions when the path forward is genuinely ambiguous. Let it.

If something feels broken at any point, run claude doctor. It diagnoses most common configuration issues automatically.

What It Cannot Do Yet

Honesty matters here.

Claude Code makes mistakes, sometimes confidently, and outputs should be reviewed rather than accepted wholesale. It has no persistent memory between sessions unless you provide context explicitly, for example through a CLAUDE.md file. Decisions that depend on undocumented organisational context, business logic that exists only in someone’s head, or the institutional history behind a particular technical decision remain firmly in the human domain.

It also operates with real access to your file system and terminal. Treat it as a highly capable collaborator who requires clear direction and thoughtful oversight, not an autonomous system you can hand something to and disengage from.

Cost is worth factoring in too. Heavy usage in a complex codebase accumulates. Understanding your usage patterns before fully committing to a workflow is a reasonable first step.
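One way to understand your usage patterns is a quick break-even estimate between pay-per-token access and a flat subscription. The sketch below shows only the arithmetic. Every number in it is a placeholder, not real pricing, and the function and parameter names are hypothetical. Substitute the current published rates and your own measured token counts.

```python
import math


def api_cost(input_tokens: int, output_tokens: int,
             price_in_per_mtok: float, price_out_per_mtok: float) -> float:
    """Pay-per-token cost of one session, with prices per million tokens."""
    return ((input_tokens / 1_000_000) * price_in_per_mtok
            + (output_tokens / 1_000_000) * price_out_per_mtok)


def break_even_sessions(flat_monthly_fee: float, cost_per_session: float) -> int:
    """Sessions per month at which the flat fee costs no more than the API."""
    if cost_per_session <= 0:
        raise ValueError("cost_per_session must be positive")
    return math.ceil(flat_monthly_fee / cost_per_session)


# Placeholder figures only: 1M input tokens and 0.5M output tokens per
# session, at made-up prices of 2.0 and 8.0 per million tokens.
session_cost = api_cost(1_000_000, 500_000, 2.0, 8.0)  # 2.0 + 4.0 = 6.0
sessions_needed = break_even_sessions(120.0, session_cost)  # ceil(120 / 6) = 20
```

With these made-up inputs, the flat fee pays for itself at 20 sessions a month. The useful habit is rerunning the calculation with real figures after a week or two of actual use.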

Where This Is Heading

The experimental multi-agent capability points toward something worth thinking about now.

A coordinating agent delegates work to specialised subagents running in parallel. One handles backend changes. Another writes tests. A third updates documentation. A fourth prepares the pull request. The human in the loop reviews, approves, and redirects.

For product organisations, this suggests a future where the distance between idea and working prototype compresses significantly, and where product thinking and technical implementation operate in much tighter feedback loops than they currently do.

The professionals who build fluency with these tools early will have a compounding advantage. Not because they are writing more code, but because they are getting better at directing the right code to be written.

Start Small, But Start

Install Claude Code. Navigate to a project you already know. Begin with something small.

Ask it to explain a confusing function. Debug a minor issue. Document a module.

The first session will feel unfamiliar. The second will feel natural. By the third, you will find yourself reaching for it the way you reach for any tool that has genuinely earned its place in your workflow.

Agentic coding is not about removing human expertise from the process. It is about redirecting that expertise toward the decisions that genuinely require it.

For senior professionals who have spent years developing judgment about products, systems, and teams, that is a meaningful shift in where your capabilities get applied.

The tools are ready.


The cross-session memory limitation is the one that trips people up most. You get deep into a complex refactor, the agent does brilliant multi-file work, and then next session you're starting fresh. CLAUDE.md files help here. The agent inherits project context from them, so you do not have to re-explain everything each time. The confident mistakes point is underrated too. The failures are rarely dumb. They're plausible, well-reasoned, and sometimes seductive. You have to stay in the loop to avoid approving work you don't actually understand. The cognitive shift isn't just “AI writes code now.” It's learning when to trust and when to verify. That turns out to be a skill of its own.