Multi-Agent AI Systems: How They Work & Why They’re the Future of Intelligence

Multi-agent systems aren’t a feature upgrade. They are a new species of intelligence.

GPT-4 isn’t the destination - it’s a dead end.

The next breakthrough in AI won’t be one giant model…

It will be thousands of intelligent agents cooperating to solve problems no single model ever could.

Multi-agent systems aren’t a feature upgrade.

They are a new species of intelligence.

Multi-agent AI is no longer a research paper fantasy.

It is the direction AI is actually moving - quietly, inevitably, and faster than most people realize.

Today’s LLMs behave like powerful autocomplete engines:

smart, fluent, impressive - but fundamentally isolated.

Tomorrow’s AI systems will behave like societies of intelligent agents working together, reasoning together, negotiating together, and solving problems no single model ever could.

If you want to understand where AI is heading in the next 3 years, you must understand multi-agent systems.

Because the future of intelligence won’t be bigger models.

It will be many models, working as one.

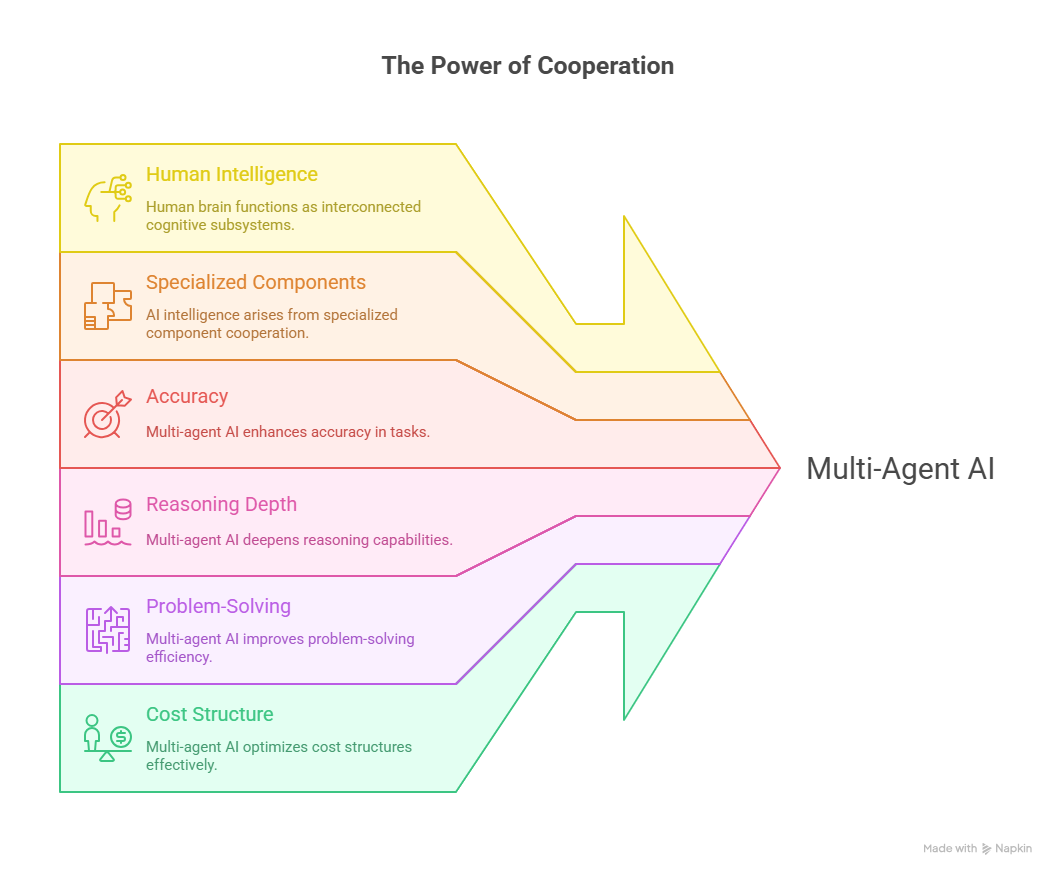

Why Multi-Agent Matters

Look at human intelligence:

We aren’t one brain.

We are thousands of cognitive subsystems exchanging signals - memory, planning, language, motor control, perception.

AI will mirror the same pattern:

intelligence emerges not from a single giant model, but from cooperation between specialized components.

That shift changes everything:

accuracy

reasoning depth

problem-solving

cost structure

safety

and autonomy

Multi-agent AI is the next evolutionary leap.

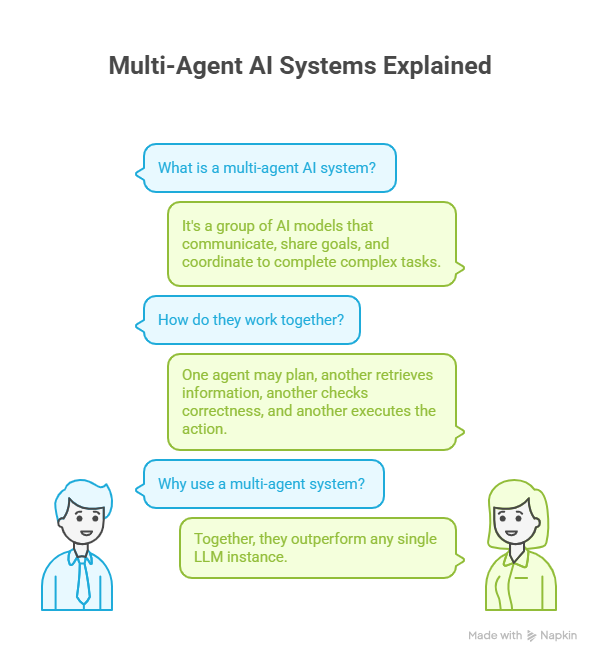

What Is a Multi-Agent AI System?

A multi-agent AI system is a group of AI models or agents that:

have unique skills or roles

communicate with each other

share goals

and coordinate to complete complex tasks

One agent may plan,

another retrieve information,

another check correctness,

and another execute the action.

Together, they can outperform a single LLM instance on complex tasks.

Why Single LLMs Are Not Enough

Today’s LLMs show four major limitations:

| LLM Limitation | Multi-Agent Fix |
|---|---|
| Hallucinations | Peer verification |
| Shallow reasoning | Specialist decomposition |
| Cost inefficiency | Role-based routing |
| Fragile prompts | Dynamic correction loops |

Multi-agent design addresses structural limits, not cosmetic issues.

Why Multi-Agent AI Systems Work Better

Multi-agent systems combine:

1. Division of labor

Just like people, AI agents specialize.

Planner → Retriever → Solver → Validator.

2. Feedback loops

Agents critique each other, which improves outputs.

3. Emergent behaviour

Capabilities that were never explicitly programmed can appear on their own.

4. Redundancy & reliability

One agent fails? Another corrects it.

This is why OpenAI, Anthropic, DeepMind, Microsoft, Google, and Meta are all building agent frameworks right now.
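The division of labor described above can be sketched as a plain function pipeline. This is a minimal illustration under stated assumptions, not any framework's API. Each hypothetical "agent" is an ordinary Python function that reads and extends a shared state dict. The planner, retriever, solver, and validator below are toy stand-ins for what would normally be LLM calls, and `KNOWLEDGE` is made-up data.

```python
from typing import Callable, List

# Hypothetical agent signature: take the shared state, return an updated copy.
Agent = Callable[[dict], dict]

# Assumed toy knowledge base standing in for a real retrieval backend.
KNOWLEDGE = {"capital of France": "Paris", "capital of Japan": "Tokyo"}


def planner(state: dict) -> dict:
    # Toy decomposition: split the goal into sub-questions on " and ".
    steps = [s.strip() for s in state["goal"].split(" and ")]
    return {**state, "steps": steps}


def retriever(state: dict) -> dict:
    # Look up each sub-question; None marks a step that could not be grounded.
    facts = {step: KNOWLEDGE.get(step) for step in state["steps"]}
    return {**state, "facts": facts}


def solver(state: dict) -> dict:
    # Combine the retrieved facts into a single answer string.
    answer = "; ".join(f"{step}: {fact}" for step, fact in state["facts"].items())
    return {**state, "answer": answer}


def validator(state: dict) -> dict:
    # Mark the answer invalid if any step is ungrounded.
    missing = [step for step, fact in state["facts"].items() if fact is None]
    return {**state, "missing": missing, "valid": not missing}


def run_pipeline(goal: str, agents: List[Agent]) -> dict:
    # Pass the shared state through each agent in order.
    state: dict = {"goal": goal}
    for agent in agents:
        state = agent(state)
    return state


TEAM = [planner, retriever, solver, validator]
```

Calling `run_pipeline("capital of France and capital of Japan", TEAM)` produces a grounded answer with `valid` set to True. Asking about the "capital of Mars" instead leaves that step in `missing`, and `valid` comes back False. Each stage only reads and extends the state, so one stage (say, a stricter validator) can be swapped out without touching the others.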

Why Multi-Agent Systems Are the Next “Moore’s Law” of Reasoning

For 10 years, AI improved by scaling model size:

More parameters = more intelligence.

But we are reaching limits:

compute cost

inference cost

latency

training complexity

The next scaling curve is:

multi-agent cooperation, which grows reasoning ability by coordinating models instead of enlarging one.

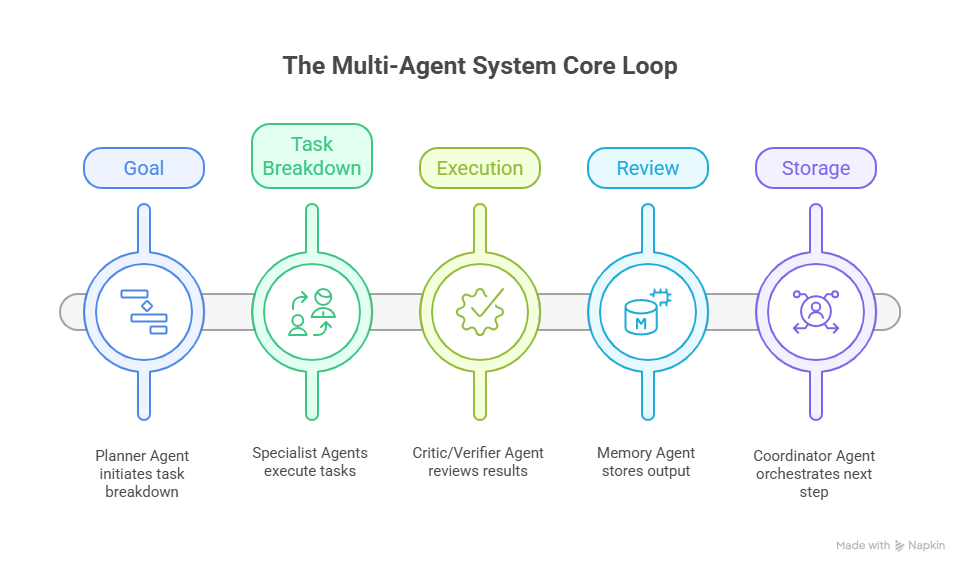

How Multi-Agent Systems Actually Work (Core Loop)

Goal → Planner Agent → Task Breakdown
↓
Specialist Agents Execute
↓
Critic/Verifier Agent Reviews
↓
Memory Agent Stores Output
↓
Coordinator Agent Orchestrates Next Step

This loop continues until completion.

Think of it like the human brain’s cortex - different regions solving different sub-problems.
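The core loop maps naturally onto a work queue. The sketch below is a toy built on explicit assumptions, not a reference design:

- `planner_agent`, `specialist_agent`, `critic_agent`, and `coordinator` are hypothetical names.
- The agents are deterministic stubs rather than models.
- The "memory agent" is just a dict.

```python
from collections import deque
from typing import Dict, List


def planner_agent(goal: str) -> List[str]:
    # Hypothetical planner: one subtask per comma-separated item in the goal.
    return [task.strip() for task in goal.split(",") if task.strip()]


def specialist_agent(task: str, attempt: int) -> str:
    # Toy specialist: returns the task in upper case, but produces an empty
    # (bad) result on the first attempt at any task containing "hard".
    if "hard" in task and attempt == 0:
        return ""
    return task.upper()


def critic_agent(task: str, output: str) -> bool:
    # Toy verifier: accepts any non-empty output.
    return bool(output)


def coordinator(goal: str, max_attempts: int = 3) -> Dict[str, str]:
    memory: Dict[str, str] = {}  # memory agent: accepted outputs by task
    pending = deque((task, 0) for task in planner_agent(goal))
    while pending:  # the loop continues until every task is resolved
        task, attempt = pending.popleft()
        output = specialist_agent(task, attempt)
        if critic_agent(task, output):
            memory[task] = output
        elif attempt + 1 < max_attempts:
            pending.append((task, attempt + 1))  # schedule a retry
        else:
            memory[task] = "FAILED"  # give up after max_attempts tries
    return memory
```

With the goal `"draft intro, hard proof, summary"`, the "hard proof" task fails review once, is requeued behind "summary", and then succeeds. `max_attempts` bounds the loop, so a task that never passes review is recorded as failed instead of being retried forever.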

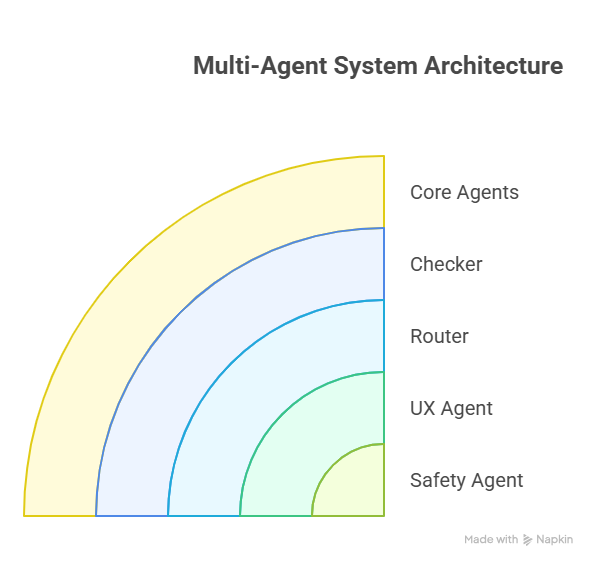

Example Architecture of a Multi-Agent System:

| Agent Role | Function |
|---|---|
| Planner | Defines steps + strategy |
| Researcher | Finds information |
| Solver | Produces answers |
| Checker | Validates correctness |
| Memory | Stores knowledge |
| Router | Assigns tasks |
| Safety Agent | Screens outputs |
| UX Agent | Translates for humans |

This looks like a workforce - not a prompt.
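The architecture table reads like a dispatch table, and a router can be sketched that way. Only the role names come from the table. The `Task` type, the task-kind labels, the blocked-term rule, and every handler are hypothetical placeholders, and the Memory role is omitted for brevity.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Task:
    kind: str  # hypothetical task labels: "plan", "research", "solve", "check"
    payload: str


# Role registry mirroring part of the table; each handler is a stub.
ROLES: Dict[str, Callable[[str], str]] = {
    "plan": lambda p: f"Planner: steps for '{p}'",
    "research": lambda p: f"Researcher: sources on '{p}'",
    "solve": lambda p: f"Solver: answer to '{p}'",
    "check": lambda p: f"Checker: verified '{p}'",
}

BLOCKED_TERMS = ("password",)  # assumed screening rule for the Safety Agent


def safety_agent(text: str) -> str:
    # Withhold any output that mentions a blocked term.
    if any(term in text.lower() for term in BLOCKED_TERMS):
        return "[withheld by Safety Agent]"
    return text


def ux_agent(text: str) -> str:
    # Drop the internal role prefix before showing the result to a human.
    return text.split(": ", 1)[-1]


def router(task: Task) -> str:
    # Look up the agent for this task kind, then screen and translate its output.
    handler = ROLES.get(task.kind)
    if handler is None:
        raise ValueError(f"no agent registered for task kind {task.kind!r}")
    return ux_agent(safety_agent(handler(task.payload)))
```

Every result passes through the Safety Agent and then the UX Agent, whatever role produced it. Adding a role then means adding a registry entry rather than editing the router.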

Real Use Cases Already Emerging

1. Deep research automation

Multi-agent research teams already speed up human work in:

literature review

academic scanning

summarization

citation tracing

2. Autonomous coding

Two agents generate code.

A third debugs it.

A fourth runs a security scan.

GitHub Copilot is the seed.

Agentic IDEs are the future.

3. Robotics and embodied AI

Agents coordinate:

vision → planning → motion → error correction.

4. Complex business workflows

Multi-agent workflows are already arriving in finance, law, architecture, and journalism.

Why Multi-Agent Systems Produce Better Reasoning

LLMs hallucinate partly because they reason alone, with nothing to check their work.

Add a critic agent, and hallucinations drop.

Add a verifier agent, and factual accuracy improves.

Add a retrieval agent, and answers become grounded in real sources.

Solo models guess.

Teams of models think.
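The critic-plus-retrieval pattern can be sketched as generate, check, revise. This is a minimal illustration that assumes exact-match answers and a hard-coded fact table. `solo_agent`, `retrieval_agent`, `critic_agent`, and `FACTS` are hypothetical, and a real critic would compare meaning rather than strings.

```python
from typing import Optional, Tuple

# Hypothetical grounding source for the retrieval agent.
FACTS = {"boiling point of water at sea level": "100 degrees C"}


def solo_agent(question: str) -> str:
    # Stand-in for a lone model that guesses (deliberately wrong here).
    return "90 degrees C"


def retrieval_agent(question: str) -> Optional[str]:
    return FACTS.get(question)


def critic_agent(answer: str, evidence: Optional[str]) -> Optional[str]:
    # Return a correction when the answer contradicts the evidence, else None.
    if evidence is not None and answer != evidence:
        return evidence
    return None


def answer_with_review(question: str, max_rounds: int = 2) -> Tuple[str, bool]:
    answer = solo_agent(question)
    evidence = retrieval_agent(question)
    for _ in range(max_rounds):
        correction = critic_agent(answer, evidence)
        if correction is None:
            break
        answer = correction  # revise toward the retrieved evidence
    grounded = evidence is not None and answer == evidence
    return answer, grounded
```

Note the second return value. When retrieval finds nothing, the solo guess is still returned but flagged as ungrounded rather than silently trusted.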

The Science: Emergent Intelligence

Studies show multi-agent groups begin to:

invent strategies,

negotiate,

debate,

collaborate,

and discover solutions nobody expected.

That is emergence: real sparks of intelligence.

This is why Google DeepMind has pursued:

Diplomacy-playing agents,

multi-agent negotiation research.

The results are promising.

A Real Example: Multi-Agent Coding

Single LLM:

Writes code with a high error rate (say, 40%).

Multi-Agent team:

one writes code,

second tests code,

third fixes bugs,

fourth checks logic.

Error rate drops drastically.

Why the Future Won’t Be One Giant Model

Everyone imagines:

“GPT-8 will be a god model and solve everything.”

Reality:

Future AI = ecosystems.

Networks.

Swarms.

Just like the brain:

not one neuron - billions.

The Coming Agent Stack

Soon tools will look like:

Planner → Vision Agent → Math Agent → Code Agent → Reasoning Agent → Safety Agent

Not just “give the model a prompt.”

We’re moving from word processors to automated research departments.

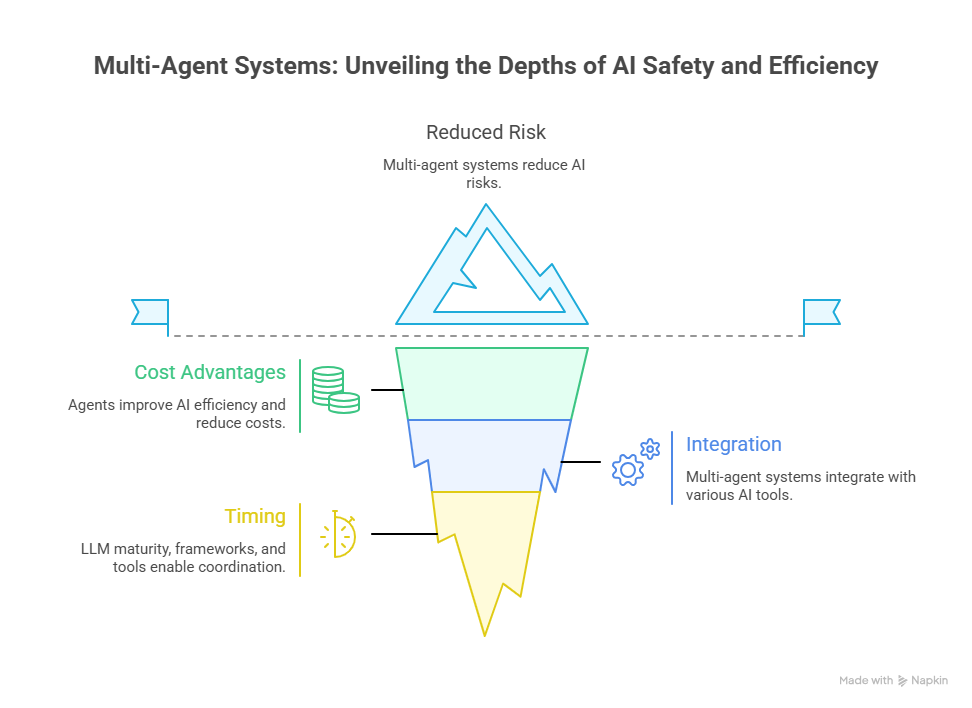

Why Multi-Agent Matters to AI Safety

Agent debate systems can substantially reduce risk: two models debating a solution often outperform a single model.

This helps guard against:

hallucination

bias

extremism

misinformation

Multi-agent = self-correcting AI.
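The debate idea can be sketched with two debaters, a round limit, and a judge. This is a simplified toy under explicit assumptions:

- The questions are multiplications.
- The debaters are hypothetical stubs.
- `verify` is a cheap exact check that real debate setups usually do not have.

```python
from typing import Callable, Optional, Tuple

Question = Tuple[int, int]  # toy question: multiply these two numbers
Debater = Callable[[Question, Optional[int]], int]


def verify(q: Question, answer: int) -> bool:
    # Cheap check a judge (or a debater) can run on a claimed answer.
    return q[0] * q[1] == answer


def careful_agent(q: Question, rival: Optional[int]) -> int:
    return q[0] * q[1]


def hasty_agent(q: Question, rival: Optional[int]) -> int:
    # Guesses wrong, but adopts the rival's answer if it checks out.
    if rival is not None and verify(q, rival):
        return rival
    return q[0] * q[1] - 2


def stubborn_agent(q: Question, rival: Optional[int]) -> int:
    return q[0] * q[1] - 2  # never updates


def debate(q: Question, a: Debater, b: Debater, rounds: int = 3) -> Tuple[Optional[int], bool]:
    ans_a, ans_b = a(q, None), b(q, None)
    for _ in range(rounds):
        if ans_a == ans_b:
            return ans_a, True  # consensus reached
        # Each debater sees the other's latest answer and may revise.
        ans_a, ans_b = a(q, ans_b), b(q, ans_a)
    # No consensus: a judge keeps only answers that pass verification.
    passing = [x for x in (ans_a, ans_b) if verify(q, x)]
    return (passing[0] if passing else None), False
```

The careful and hasty debaters converge on the right product after one exchange. If the stubborn debater faces the careful one, the judge settles the question. Two identical stubborn debaters, however, "agree" on the wrong answer. That is why debate works best with diverse agents plus some independent check.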

Cost Advantages

Agents = efficiency.

Instead of:

GPT-4 everywhere,

you run:

cheap model for tasks

big model for reasoning

tiny model for recall

Routing solves cost bloat.
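The routing idea can be sketched as a lookup from task type to model tier. The tier names and per-token prices below are made-up illustrative numbers, not real model prices. The savings ratio depends entirely on those assumptions.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class ModelTier:
    name: str
    price_per_1k_tokens: float  # illustrative numbers, not real prices


# Hypothetical tiers matching the list above.
TIERS: Dict[str, ModelTier] = {
    "task": ModelTier("cheap-model", 0.5),
    "reasoning": ModelTier("big-model", 10.0),
    "recall": ModelTier("tiny-model", 0.1),
}
BIG_MODEL = TIERS["reasoning"]


def pick_tier(kind: str) -> ModelTier:
    # Unrecognized work falls back to the big model instead of failing.
    return TIERS.get(kind, BIG_MODEL)


def total_cost(jobs: List[Tuple[str, int]], routed: bool = True) -> float:
    # Sum the price of each (kind, tokens) job, routed or all on the big model.
    cost = 0.0
    for kind, tokens in jobs:
        tier = pick_tier(kind) if routed else BIG_MODEL
        cost += tier.price_per_1k_tokens * tokens / 1000
    return cost
```

Take the jobs `[("task", 8000), ("recall", 10000), ("reasoning", 2000)]`. Under these assumed prices, routed execution costs 25 units, against 200 when everything goes to the big model. The fallback keeps unknown work on the most capable tier, trading cost for safety.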

Integration With RAG, Memory & Tools

Multi-agent becomes unstoppable when combined with:

vector search

tool calling

structured memory

data caches

APIs

Each agent controls one part of the pipeline: assembly-line intelligence.
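One slice of that assembly line can be sketched as an agent that owns retrieval and tool calls and logs results to shared memory. The assumptions:

- The bag-of-words `embed` is a stand-in for a real embedding model.
- `DOCS`, `TOOLS`, and `MEMORY` are hypothetical.
- No specific vector database or tool-calling API is implied.

```python
import math
from collections import Counter
from typing import Any, Callable, Dict, List, Tuple

# Hypothetical document store for the vector-search step.
DOCS = {
    "refunds": "refund policy allows returns within 30 days",
    "shipping": "shipping takes five business days",
}


def embed(text: str) -> Counter:
    # Stand-in for a real embedding model: bag-of-words counts.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two word-count vectors.
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def vector_search(query: str, k: int = 1) -> List[str]:
    # Rank document ids by similarity to the query; return the top k.
    q = embed(query)
    ranked = sorted(DOCS, key=lambda doc_id: cosine(q, embed(DOCS[doc_id])), reverse=True)
    return ranked[:k]


# Tool registry: plain functions standing in for external APIs.
TOOLS: Dict[str, Callable[..., Any]] = {
    "search": vector_search,
    "days_to_weeks": lambda days: days / 7,
}

# Structured memory: a log of (tool name, result) pairs other agents can read.
MEMORY: List[Tuple[str, Any]] = []


def call_tool(name: str, *args: Any) -> Any:
    # Run the named tool and record its result in shared memory.
    result = TOOLS[name](*args)
    MEMORY.append((name, result))
    return result
```

A query such as "how many days for returns" ranks the refunds document first. Because every call is logged in `MEMORY`, a downstream agent can reuse earlier results instead of repeating the lookup.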

The Big Question: Why Now?

Three forces collided:

LLM maturity

agent frameworks

orchestration tools

This unlocked coordination at scale.

Future Outlook

By 2030, we will likely see:

autonomous research labs,

agent companies with no employees,

self-running businesses,

AGI flavours emerging from multi-agent swarms.

The winners won’t be those with the biggest models.

They’ll be those who design smarter agent societies.

Final Takeaway

Multi-agent AI systems are not a feature upgrade.

They are an intelligence paradigm shift.

LLMs today resemble talented individuals.

Multi-agent AI resembles organizations.

And history is clear:

organizations outperform individuals.

The future of AI isn’t a model.

It’s a system.

A team.

A society.

A network of intelligence woven together.

We are entering the age of agentic AI, and it will redefine what intelligence means.

