Most Product Managers Are Learning AI Wrong - And It’s Costing Them Their Edge

Everyone's taking the same AI courses. The PMs pulling ahead are doing something completely different.

Let’s be honest about something.

Most PMs who say they’re “learning AI” are doing one of two things. They’re either watching YouTube explainers late at night with no plan to apply any of it, or they’re using ChatGPT to write PRDs and calling that AI fluency.

Neither of those things will make you a better product leader. And in a market where every company is reorganizing around AI, that gap is going to cost you.

This article is a practical guide. Real sources, real tools, a clear sequence to follow, and a way to actually bring what you learn into your org’s decisions. Less theory. More traction.

Why Most PM-AI Learning Fails Before It Even Starts

The problem isn’t access to information. There is more AI content online than any one person can read in a lifetime.

The problem is sequence. PMs jump to tools before they understand concepts. They build prototypes without knowing how to translate that into a business decision. And then they wonder why none of it sticks.

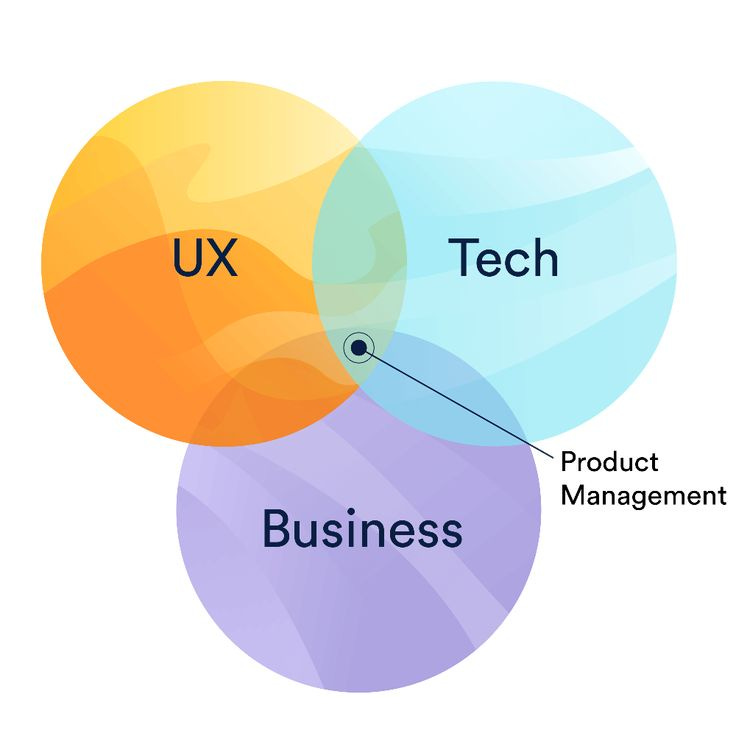

The right sequence looks like this: Concepts first. Then tools. Then org implementation.

That’s it. That’s the whole framework. Everything below is how you execute each layer.

Layer 1: Build the Foundation

Building a strong foundation in AI for product and business roles is not about learning how to code. It’s about developing the right mental models to understand how AI behaves in real-world scenarios. At its core, AI does not “think” or “know” anything; it identifies patterns and predicts outputs based on data. To build this foundation, focus on three key areas:

Understanding capabilities vs. limitations:

Learn what AI is good at, such as generating text, summarizing information, and recognizing patterns, and where it fails, including reasoning errors, lack of true understanding, and hallucinations. This helps you avoid overestimating what AI can do in a product.

Grasping how modern AI systems work (conceptually):

You don’t need the math, but you should understand ideas like large language models, tokens, context, and training data. Knowing that AI responses are probabilistic predictions, not looked-up facts, explains why outputs can vary and why consistency is a challenge.

Thinking like an AI product builder:

Move beyond theory into application by learning concepts such as prompt design, fine-tuning, and retrieval-based systems. These determine how AI behaves inside a product. At the same time, understand tradeoffs like accuracy vs. speed, cost vs. quality, and automation vs. control.

Most importantly, you need to deeply understand failure modes and how AI breaks in real user experiences. This includes situations where AI gives confident but incorrect answers, misinterprets intent, or produces inconsistent outputs. Recognizing these patterns allows you to design systems that are more reliable, add safeguards where needed, and create better user experiences.
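If “probabilistic” still feels abstract, a toy example helps. The sketch below is not how any real model is implemented. It assumes a made-up set of scores for three candidate next words and shows the one idea that matters for product work: the model samples from a probability distribution, and a setting commonly called temperature controls how much the output varies from run to run. The scores, names, and function names are all hypothetical.

```python
import math
import random


def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities; a lower temperature sharpens them."""
    scaled = [s / temperature for s in scores]
    top = max(scaled)  # subtract the max so exp() stays numerically stable
    exps = [math.exp(s - top) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_token(candidates, temperature=1.0, rng=random):
    """Pick one candidate token at random, weighted by its probability."""
    tokens = list(candidates)
    probs = softmax([candidates[t] for t in tokens], temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]


# Hypothetical scores for the word after "The capital of France is".
TOY_SCORES = {"Paris": 3.0, "Lyon": 1.5, "London": 0.5}

if __name__ == "__main__":
    rng = random.Random(7)  # seeded only so the demo is repeatable
    for temperature in (0.1, 1.0):
        picks = [sample_next_token(TOY_SCORES, temperature, rng) for _ in range(10)]
        print(f"temperature={temperature}: {picks}")
```

Run it and compare the two lines of output. At a low temperature the top-scoring word wins almost every time. At a higher one, lower-scoring words show up too, which is the same mechanism behind a product giving two users different answers to the same question.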

Layer 2: Get Hands-On With Tools

Reading about AI and using AI are completely different experiences. The goal here is not to become a power user of every tool. The goal is to build intuition for what AI can reliably do, what it gets wrong, and what that means for product design.

Here are the tools worth your time, grouped by what they teach you:

For understanding language models and prompting:

ChatGPT (GPT-4o) and Claude (claude.ai) are both worth using side by side. Don’t just use one. Compare how they respond to the same prompt. Notice where they disagree, where they confabulate, where they refuse. That comparison will teach you more about model behavior than any course.

Anthropic’s Prompt Library (docs.anthropic.com/en/prompt-library) gives you real-world prompt structures across dozens of use cases. Study the patterns, not just the outputs.

For building without code:

Notion AI is the easiest place to start. Use it to summarize your user research notes, draft interview questions, and cluster feedback themes. This directly mirrors what AI-assisted discovery looks like in a real product team.

Zapier AI (Zapier Central) lets you build simple AI agents that connect tools you already use. Build one workflow: pull customer support tickets, summarize them by theme, and send a weekly digest to Slack. It takes about 45 minutes and teaches you more about AI workflow design than a full week of reading.

Perplexity AI is worth using as a research tool. It shows you what AI-assisted search feels like from a user’s perspective, which is useful if you’re building anything in the search or information retrieval space.
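If you want to see the shape of the support-ticket digest workflow before building it in Zapier, here is a rough sketch in Python. It does not use Zapier or any AI service. The keyword matching is a deliberately crude stand-in for the AI summarization step, and the themes, keywords, and function names are all assumptions made for illustration.

```python
from collections import Counter

# Hypothetical themes and keywords; in the real workflow an AI step does this part.
THEME_KEYWORDS = {
    "billing": ("invoice", "charge", "refund"),
    "login": ("password", "sign in", "locked out"),
    "performance": ("slow", "timeout", "crash"),
}


def classify_ticket(text):
    """Return the first theme whose keyword appears in the ticket, else 'other'."""
    lowered = text.lower()
    for theme, keywords in THEME_KEYWORDS.items():
        if any(keyword in lowered for keyword in keywords):
            return theme
    return "other"


def build_weekly_digest(tickets):
    """Count tickets per theme and format a plain-text digest, largest theme first."""
    counts = Counter(classify_ticket(ticket) for ticket in tickets)
    lines = [f"Weekly support digest ({len(tickets)} tickets)"]
    for theme, count in counts.most_common():
        lines.append(f"- {theme}: {count}")
    return "\n".join(lines)


if __name__ == "__main__":
    sample = [
        "I was charged twice, please refund me",
        "Cannot reset my password",
        "Where is my invoice for March?",
        "The dashboard is very slow today",
    ]
    print(build_weekly_digest(sample))
```

Notice the three stages: collect, classify, report. The interesting product questions all live in the middle one. What happens to a ticket that fits two themes, or none? Those are the edge cases you will run into when an AI step replaces the keyword list.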

For understanding what AI sees in data:

julius.ai lets you upload a CSV and ask questions about it in plain English. If you’ve ever wondered how an AI analyst layer could sit on top of your product metrics, this shows you that experience directly.

The rule for this layer: every tool you try, write one paragraph about what surprised you. What did it get wrong? What did it do better than you expected? What UX decision did the product make to handle AI’s limitations? This habit builds the critical lens you need as a PM.

Layer 3: Implement It in Your Org

This is where most PMs stop, because this part is harder than consuming content. But this is also the part that actually changes your career trajectory.

Here is a clear sequence for bringing AI into your org’s thinking and decisions:

Step 1: Start with your own workflow, not your product

Before you pitch an AI feature, use AI in how you do your job. Use Claude or ChatGPT to synthesize user interview transcripts. Use Notion AI to draft your PRDs faster and spend the saved time on sharper thinking. Use Perplexity to do competitive research in a fraction of the time.

Why does this matter? Because when you have firsthand experience of where AI helps and where it gets in the way, you become a much better decision-maker about what to build for your users. You stop theorizing and start knowing.

Step 2: Run one AI-assisted research sprint

Pick your next user research cycle and change how you process the data. After your interviews, feed the transcripts (anonymized) into Claude or ChatGPT with this prompt:

“Here are transcripts from 8 user interviews. Identify the top 5 pain points mentioned, group them by theme, and flag any contradictions between what users say they want and what they describe actually doing.”

Then compare that output to your own synthesis. Where did it miss? Where did it catch something you glossed over? That exercise builds a mental model for how to use AI as a thinking partner rather than a replacement.
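If you run this sprint more than once, it helps to assemble the prompt the same way each time. The sketch below builds the prompt from Step 2 out of a list of transcripts and does a very basic anonymization pass first. Treat it as a starting point under stated assumptions: the regex only catches email addresses, participant names have to be supplied by you, and real transcripts will need a more careful review before they leave your machine. It does not call any AI service; you paste the result into Claude or ChatGPT yourself.

```python
import re

# Simple pattern for email addresses; it will not catch every possible format.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def anonymize(text, names=()):
    """Replace email addresses and the given names with neutral placeholders."""
    text = EMAIL_PATTERN.sub("[email]", text)
    for name in names:
        text = re.sub(rf"\b{re.escape(name)}\b", "[participant]", text)
    return text


def build_synthesis_prompt(transcripts, names=(), top_n=5):
    """Assemble the research-synthesis prompt from anonymized transcripts."""
    cleaned = [anonymize(transcript, names) for transcript in transcripts]
    header = (
        f"Here are transcripts from {len(cleaned)} user interviews. "
        f"Identify the top {top_n} pain points mentioned, group them by theme, "
        "and flag any contradictions between what users say they want "
        "and what they describe actually doing."
    )
    body = "\n\n".join(
        f"--- Interview {number} ---\n{text}"
        for number, text in enumerate(cleaned, start=1)
    )
    return f"{header}\n\n{body}"


if __name__ == "__main__":
    demo = [
        "Ana (ana@example.com) said export is slow.",
        "Ben says he wants dashboards but never opens them.",
    ]
    print(build_synthesis_prompt(demo, names=["Ana", "Ben"]))
```

Keeping the interview count and the number of pain points as parameters means the prompt stays accurate when you run 6 interviews instead of 8.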

Step 3: Introduce an AI capability conversation into roadmap planning

The next time your team is doing roadmap prioritization, add one question to the session: “For each of these opportunities, what would it take for AI to make this 10x better? And do we have the data to do that today?”

You don’t need answers right away. You need the habit of asking. This shifts your team from treating AI as a feature to treating it as a lens on the entire product.

Step 4: Build a simple AI readiness map for your product

This is the output that makes you credible with leadership. It doesn’t have to be a fancy document. It’s a one-pager that answers:

What user problems in our product are high-frequency, language-based, or prediction-based?

What data do we currently have that a model could learn from?

What data are we missing that we’d need to collect first?

Where does AI introduce trust or accuracy risks that require UX guardrails?

When you can walk into a leadership meeting and present this, you are no longer just a PM who “knows about AI.” You are the person helping the org make a grounded bet instead of a hopeful one.
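The readiness map works fine as a plain document. If you prefer to keep it somewhere structured so it is easy to update and to spot gaps, one possible shape is sketched below. The class name, field names, and example product are assumptions for illustration; the four sections mirror the four questions above.

```python
from dataclasses import dataclass, field


@dataclass
class ReadinessMap:
    """One-page AI readiness map; the field names are illustrative assumptions."""

    product: str
    candidate_problems: list = field(default_factory=list)
    available_data: list = field(default_factory=list)
    missing_data: list = field(default_factory=list)
    risks_needing_guardrails: list = field(default_factory=list)

    def sections(self):
        """Pair each of the four questions with the answers collected so far."""
        return {
            "High-frequency, language-based, or prediction-based user problems": self.candidate_problems,
            "Data we have that a model could learn from": self.available_data,
            "Data we would need to collect first": self.missing_data,
            "Trust or accuracy risks that need UX guardrails": self.risks_needing_guardrails,
        }

    def unanswered(self):
        """Titles of the sections that are still empty."""
        return [title for title, items in self.sections().items() if not items]

    def render(self):
        """Format the map as plain text, marking empty sections explicitly."""
        lines = [f"AI readiness map: {self.product}"]
        for title, items in self.sections().items():
            lines.append("")
            lines.append(title)
            lines.extend(f"  - {item}" for item in items or ["(not answered yet)"])
        return "\n".join(lines)


if __name__ == "__main__":
    draft = ReadinessMap(
        product="Hypothetical Helpdesk",
        candidate_problems=["Agents manually tag every incoming ticket"],
        available_data=["Two years of tagged tickets"],
    )
    print(draft.render())
    print("Still open:", draft.unanswered())
```

The `unanswered` check is the useful part. An empty section is not a failure; it tells you which question to take to your data or design lead before the leadership meeting.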

Step 5: Identify one low-stakes AI experiment to ship in the next quarter

Not a moonshot. Something small, observable, and reversible. An AI-generated summary of a long report. An AI-assisted search experience in your internal tool. A GPT-powered draft email feature for your users.

The goal is to get your team through the full loop of scoping, building, measuring, and learning on an AI feature before the stakes are high. The lessons from a small experiment are worth more than six months of strategy decks.

What To Do Now?

If you finish this article and do nothing else, do these four things this week:

Enroll in “AI for Everyone” on Coursera. It is free to audit. Do it this weekend.

Then enroll in the “AI first product management fellowship” on Product space for a more structured learning experience.

Subscribe to Aakash Gupta’s newsletter & AI Space. Read every issue for the next 30 days.

Build one Zapier AI workflow using data from your actual job. Any workflow. It doesn’t matter if it’s useful. What matters is that you build it.

AI fluency for PMs is not a destination. It is a practice. The PMs who win in the next five years won’t be the ones who read the most about AI. They’ll be the ones who built the habit of applying it, learning from it, and making better decisions because of it.

Start this week. Not next quarter.

If this was useful, share it with one PM on your team who’s been meaning to “get into AI” but hasn’t started yet. That’s who this was written for.

Hit reply and tell me: what’s the one thing in your product you most want to use AI to improve? I read every response.
